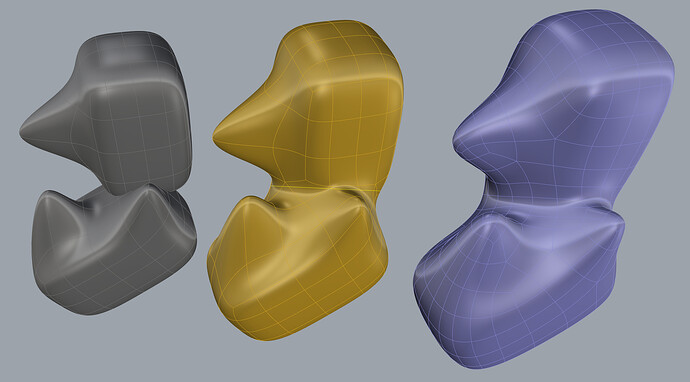

Yes, it means just that. If you look up YouTube videos on retopo, you will see that Blender, Modo, Maya, TopoGun, 3DCoat and ZBrush all do it fairly well for organic things like characters, nature pieces, etc. I think Rhino could eventually have a leg up in this space for the type of work you do.

The LIDAR in the next iPhone, if it's similar to the one in the current iPad Pro, is fantastic for getting depth information for AR placement of objects, or, as they showed yesterday for the new iPhone Pro, for separating foreground from background elements so the camera can focus. So it's basically a camera helper. Sure, you can get the rough dimensions of a room or a window (in those cases AI helps by assuming straight lines to fill in the missing information). But for the work we do? I don't think it will be very useful. Your manual ruler, T-square or calipers would do a much better job. Maybe it could be good for a very organic form, like a very large casting or something similar.

Just look at the resolution of the iPad's LIDAR here (dots captured with an infrared camera):

Here's a scan of a shoe made with the iPad Pro's LIDAR:

This is the shoe it was trying to scan:

The only scenario where I can see this scanner being useful is if Apple includes the depth information of photos in their AppleRaw photo format. Then, with a bunch of those photos, a photogrammetry program could potentially do a much better job than it can with flat images alone. Yet, IF Apple includes that info, someone still has to code it into a good app. That's a lot of IFs for a $9.99 app with limited market appeal, IMO.

Best,

G